The New Meaning of 'Developer'

March 12, 2026

By John Mannes

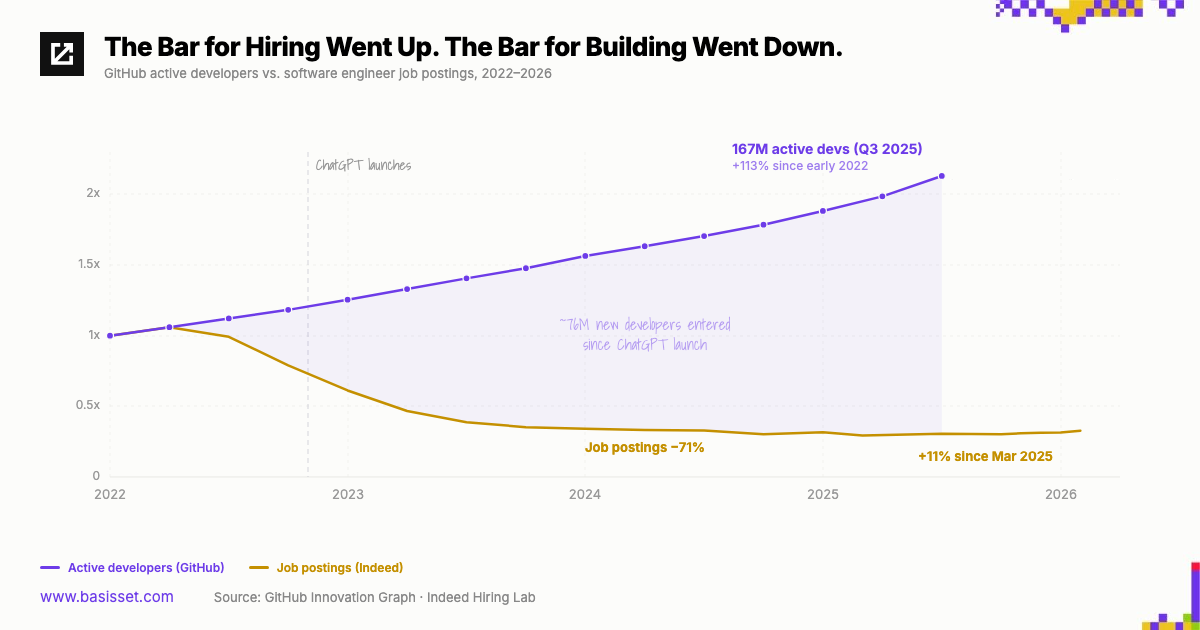

There are nearly twice as many developers as there were three years ago. There are about a third as many job postings.

Since early 2022, active developers on GitHub have nearly doubled — from 90 million to 167 million. Over the same period, software engineering job postings on Indeed dropped 71%. The lines crossed right after ChatGPT launched.

The conventional narrative is straightforward: AI is replacing developers. Another version of the same story says the opposite: AI is creating developers, everyone is learning to code, and the market is flooded. The debate is playing out live right now. Citadel Securities' "Global Intelligence Crisis" report recently pointed to recovering job postings as evidence that software engineering demand is back, while the doom crowd says software engineering is over.

I don't think either framing is right. Not because the data is wrong, but because both treat "developer" as a fixed category. I think the more interesting question is whether the word itself is about to stop meaning what we think it means.

I'm one of those new GitHub users. I'm not a developer. But I have GitHub Actions running, data pipelines stored in repos, AI agents operating out of my codebase. I didn't learn to code. I just started building things, and the tools met me where I was.

"Computer" used to mean a person. Through the early 20th century, a computer was someone who performed calculations by hand. Then we built machines that did the same work, and the word migrated from the person to the device. The human computers didn't disappear. The word just stopped describing them.

"Developer" is going through something similar. For decades, it's meant someone who writes code — who speaks the machine's language to make it do things. But that definition was never stable. In the 1960s, a developer was someone who wired circuits or punched cards. In the '90s, it meant someone who could build a website. In 2015, it meant someone fluent in modern frameworks and cloud infrastructure. The word kept its shape while the substance underneath completely changed.

For most of computing history, the only way to interact with a computer was to program it. There was no "using" a computer — every interaction was development by definition. Then the personal computer created a split. The GUI made it possible to interact with a machine without writing instructions, and a whole set of activities that were previously programming got reclassified as "just using." The definition of "developer" moved up to whatever was still hard.

That split shaped more than job titles; it shaped how we think about computation itself. The personal computer became so dominant that we started defining the entire concept of computing through it, and what a computer can do became the boundary of what we imagined was computable. Some people define AGI that way: can an AI do everything a human does on a computer? As if the computer is the ceiling.

But computation was always bigger than the computer, and development was always bigger than code. A loom takes patterned input and produces structured output. A city takes policy, resources, and people and processes them into traffic patterns, economic activity, and culture. An immune system identifies threats, generates candidate solutions, tests them, and remembers what works. These are all computation.

Even within digital computation, complex work has been leaving your device for two decades. The cloud started it. Mobile accelerated it. AI widened the gap further — the inference behind tools like Claude requires hardware no individual could own. Apple sells a $599 Mac now, unthinkable a decade ago. That's not in spite of the explosion in computational capability — it's because of it. Computation outgrew our computers. And yet the computer is still the framework through which we define development.

So what does "developer" mean in five years? I think it expands far beyond code. If computation is the general-purpose activity of designing systems that take inputs, process them, and produce outcomes, then a developer is anyone who does that intentionally, on any system. The word either grows to absorb that broader meaning or it becomes as quaint as "typist."

It looks like development is over because we've "solved" the personal computer. But the personal computer was never computing — it was one narrow expression of it. So the goalpost moves. Everyone becomes a developer in the old sense, the same way everyone who uses Excel would have been a developer fifty years ago. But the frontier moves to computation that extends beyond the frameworks we've been confined to for the last century.