April 23, 2026

By Chang Xu

In a companion post, I argued that the agent era structurally favors open source. Open models are closing the gap on frontier models. Open harnesses are gaining share. Cost economics and controllability make open source an increasingly attractive option for the next wave of software factories and agentic products. The data is clear.

But what does that mean for investors? Where does value accrue in a world where both open and closed win, and the overall pie is massive?

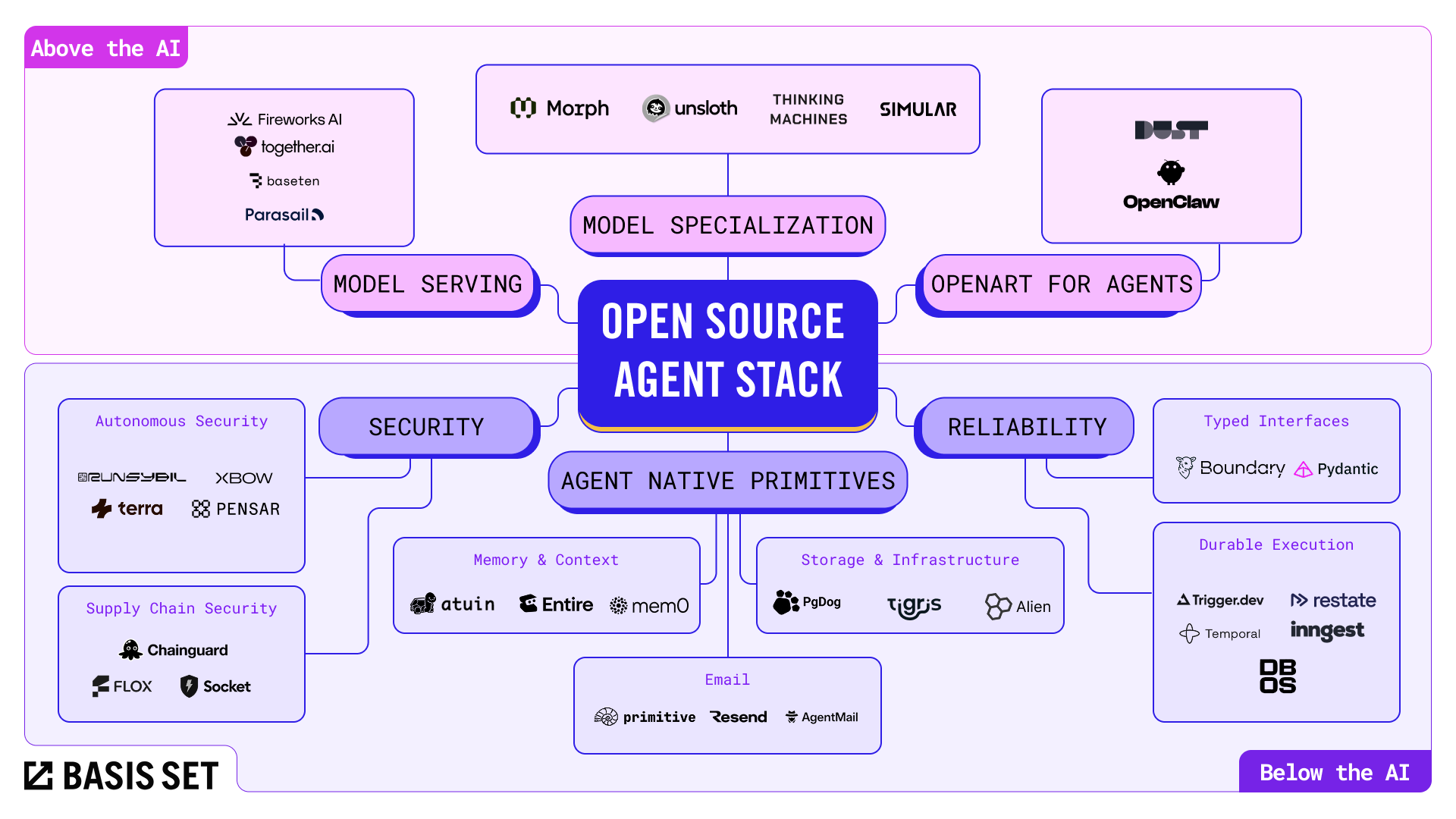

I think about the opportunity in two layers: the infrastructure beneath the AI, and the products built on top of it.

Below the AI is the infrastructure that every agent stack needs regardless of whether you use Claude Code or your own open-source harness, regardless of whether you run Opus or Qwen. These are the companies solving problems that LLMs themselves cannot solve.

LLMs are extraordinary at generating code. They are not good at generating operational excellence. Software like Temporal, battle-tested at Netflix, Datadog, and OpenAI, carries hard-won reliability that no LLM can synthesize from training data. That operational depth is what makes below-the-AI companies defensible.

Anyone can now vibe-code an agent that queries your database, installs unvetted packages, and executes arbitrary code. AI-generated code contains 2.74x more vulnerabilities than human-written code. The Q1 2026 evidence is alarming: the LiteLLM supply chain compromise hit 36% of cloud environments in two hours, the Axios npm package was backdoored by a North Korean state actor, the ClawHavoc campaign planted over 1,000 malicious skills on ClawHub, and slopsquatting attacks, which register the nonexistent package names that LLMs hallucinate, exploit the fact that vibe-coded software skips human verification entirely. When agents install dependencies and execute code at machine speed with zero human review, security becomes existential.

Autonomous and continuous security addresses the expanding attack surface: XBOW ($120M Series C, $1B+ valuation) builds autonomous pentesting agents. Terra Security ($38M, Felicis) and RunSybil ($40M, Khosla, founded by OpenAI's first security hire) build agentic offensive security platforms. Pensar is taking a bottom-up approach with Apex, their open-source pentesting agent, while their commercial platform provides continuous AI-native security scanning.

Supply chain security is becoming equally critical as agents aggressively pull in dependencies: Socket ($65M) detects and blocks over 100 supply chain attacks per week, Chainguard secures agent skills from community registries with reconciliation agents and audit trails, and Flox ($25M) provides deterministic environments with complete SBOMs so agents cannot introduce environment drift.
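
To make the supply-chain point concrete, here is a minimal sketch of one long-standing defense, hash pinning (the same idea behind pip's `--require-hashes` mode): an agent may only install an artifact whose name, version, and SHA-256 digest appear in a human-reviewed lockfile. The `Pin`, `build_lock`, and `verify_artifact` names are hypothetical, and the sketch does not describe how Socket, Chainguard, or Flox work internally.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


class DependencyRejected(Exception):
    """Raised when an artifact is not vouched for by the reviewed lockfile."""


@dataclass(frozen=True)
class Pin:
    name: str
    version: str
    sha256: str  # hex digest recorded when a human reviewed this artifact


Lock = Dict[Tuple[str, str], Pin]


def build_lock(pins: Iterable[Pin]) -> Lock:
    return {(p.name, p.version): p for p in pins}


def verify_artifact(lock: Lock, name: str, version: str, payload: bytes) -> None:
    """Allow installation only if (name, version) is pinned and the bytes match."""
    pin = lock.get((name, version))
    if pin is None:
        # A hallucinated or typo-squatted package name is simply not in the lock.
        raise DependencyRejected(f"{name}=={version} is not pinned")
    if hashlib.sha256(payload).hexdigest() != pin.sha256:
        # Same name and version but different bytes: possibly a tampered release.
        raise DependencyRejected(f"{name}=={version} failed its sha256 check")
```

A hallucinated package name fails the lockfile lookup, and a backdoored release of a legitimate package fails the digest check, so neither reaches the agent's environment unless a human updates the lock.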

Open-source models and harnesses are "good enough" for many tasks. What remains is the reliability gap, and that is where value concentrates. This spans several sub-categories with a common thread: taking open-source agent workflows from demo to production.

Typed interfaces and structured outputs. Agents produce non-deterministic outputs, but downstream systems need predictable, validated, typed data. BoundaryML created BAML, an open-source language for building type-safe AI applications, with automatic parallelization and streaming that preserves partial type guarantees mid-stream. Instructor, Outlines, and Pydantic AI tackle structured output from different angles.
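
As an illustration of what "validated, typed data" means in practice, the sketch below parses a model's raw text into a typed Python dataclass and rejects anything that does not match. It uses only the standard library, supports only flat schemas of simple scalar types, and relies on a hypothetical `Invoice` schema. It is not the API of BAML, Instructor, Outlines, or Pydantic AI, which go much further with features such as schema-aware prompting, retries, constrained decoding, and streaming.

```python
import json
from dataclasses import dataclass, fields
from typing import Any, Type, TypeVar

T = TypeVar("T")


class SchemaError(ValueError):
    """Raised when model output cannot be turned into the expected type."""


@dataclass(frozen=True)
class Invoice:  # hypothetical schema a downstream system expects
    vendor: str
    total_cents: int
    paid: bool


def parse_typed(raw: str, cls: Type[T]) -> T:
    """Parse raw LLM text as JSON and check every field of dataclass `cls`."""
    try:
        data: Any = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise SchemaError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise SchemaError("expected a JSON object")
    kwargs = {}
    for f in fields(cls):
        if f.name not in data:
            raise SchemaError(f"missing field {f.name!r}")
        value = data[f.name]
        # bool is a subclass of int in Python, so reject True/False for int fields.
        wrong_bool = f.type is int and isinstance(value, bool)
        if wrong_bool or not isinstance(value, f.type):
            raise SchemaError(f"field {f.name!r} should be {f.type.__name__}, got {value!r}")
        kwargs[f.name] = value
    return cls(**kwargs)
```

In a real pipeline, a `SchemaError` would typically trigger a retry with the error message fed back to the model, rather than letting malformed data flow downstream.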

Durable execution. Agents are expanding to always-on, multi-day workflows, but infrastructure for durable, observable, resumable, and safe agent operations is still maturing. Temporal raised $300M at a $5B valuation, with OpenAI, Netflix, and Datadog as customers. Inngest raised $21M explicitly targeting AI agent reliability, with 32,000 weekly CLI downloads growing 35x year-over-year. Trigger.dev ($20M total) evolved from background jobs into a full AI agent runtime. DBOS brings database-backed durable execution and recently partnered with Databricks on agentic reliability. Restate ($7M seed from Redpoint, founded by Apache Flink creator Stephan Ewen) reimagines durable execution as a general-purpose distributed programming model.
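
The core idea behind durable execution can be sketched in a few lines: record each completed step's result in a persistent journal, and on restart, replay the workflow so that finished steps return their recorded results instead of running again. This is a toy, single-process illustration with hypothetical names (`Journal`, `run_workflow`) and a JSON file as the store. It is not the API of Temporal, Inngest, Trigger.dev, DBOS, or Restate, which also handle concurrency, timers, retries, and versioning, and which generally require workflow code to be deterministic so that replay is safe.

```python
import json
from pathlib import Path
from typing import Any, Callable, Dict, List, Optional


class Journal:
    """Persists the result of each completed step so a restarted run can skip it."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.results: Dict[str, Any] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    def step(self, name: str, fn: Callable[[], Any]) -> Any:
        if name in self.results:
            # Replay: the step finished in an earlier run, so reuse its result.
            return self.results[name]
        result = fn()  # results must be JSON-serializable in this sketch
        self.results[name] = result
        # Write a temp file, then rename it over the journal, so a crash
        # mid-write never leaves a half-written journal behind.
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(self.results))
        tmp.replace(self.path)
        return result


def run_workflow(journal: Journal, executed: List[str], crash_at: Optional[str] = None) -> str:
    """A three-step agent workflow; `crash_at` simulates the process dying mid-step."""

    def work(name: str, output: str) -> str:
        if name == crash_at:
            raise RuntimeError(f"simulated crash during {name!r}")
        executed.append(name)  # track which steps actually ran in this attempt
        return output

    plan = journal.step("plan", lambda: work("plan", "outline"))
    draft = journal.step("draft", lambda: work("draft", plan + " -> draft"))
    return journal.step("publish", lambda: work("publish", draft + " -> published"))
```

Running with `crash_at="draft"` records only `plan`; a second run against the same journal file skips `plan` and executes just `draft` and `publish`.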

Many developer primitives were designed for humans. Agents have fundamentally different constraints: they need programmatic interfaces, isolation guarantees, and context persistence that human-era tools never considered. A new generation of companies is rebuilding these primitives from the ground up.

Memory and context. Agents become dramatically more valuable when they remember users, projects, and prior state without brute-forcing everything into the context window. Mem0* is building the universal memory layer for AI agents; it is used by over 100,000 developers and works with any model and any harness. Entire*, founded by former GitHub CEO Thomas Dohmke with a $60M seed round, captures the full context of agent-generated code: prompts, reasoning, decisions, and tool calls, not just diffs. Atuin (29,000+ GitHub stars) replaces traditional shell history with a SQLite-backed contextual database with encrypted cross-machine sync.
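
To show what "remembering without brute-forcing the context window" means mechanically, here is a deliberately simple sketch: memories are stored per user, and only the few most relevant ones are retrieved for a given question and placed in the prompt. Relevance here is plain keyword overlap for brevity; production memory layers typically use embeddings and more sophisticated extraction. `MemoryStore` and `build_prompt` are hypothetical and are not Mem0's API.

```python
import re
from collections import Counter
from typing import List, Tuple


def _tokens(text: str) -> Counter:
    """Lowercased alphanumeric word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


class MemoryStore:
    def __init__(self) -> None:
        self._items: List[Tuple[str, str, Counter]] = []

    def add(self, user_id: str, text: str) -> None:
        self._items.append((user_id, text, _tokens(text)))

    def recall(self, user_id: str, query: str, k: int = 3) -> List[str]:
        """Return up to k of this user's memories, ranked by word overlap with the query."""
        q = _tokens(query)
        scored = []
        for uid, text, toks in self._items:
            if uid != user_id:
                continue  # memories are scoped to a single user
            overlap = sum((q & toks).values())  # & keeps the min count per shared word
            if overlap > 0:
                scored.append((overlap, text))
        scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep insertion order
        return [text for _, text in scored[:k]]


def build_prompt(store: MemoryStore, user_id: str, question: str) -> str:
    """Include only the relevant memories instead of the user's entire history."""
    memories = store.recall(user_id, question)
    context = "\n".join(f"- {m}" for m in memories)
    return f"Known about this user:\n{context}\n\nQuestion: {question}"
```

The prompt grows with the number of relevant memories, not with the length of the user's history, which is the property that lets an agent remember a lot while keeping each call small.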

Email. Email is the natural communication layer for agents interacting with humans and the outside world. Primitive.dev built email infrastructure for agents with a custom SMTP layer, delivering inbound events to webhooks. AgentMail ($6M seed, General Catalyst) gives agents their own inboxes with two-way threading. Resend ($18M Series A), used by 1M+ developers, provides a modern transactional email API with first-class React Email support.

Storage and infrastructure. Agent workloads demand isolated data clones, branching, and high-throughput object access without egress penalties. Tigris Data* offers purpose-built object storage with copy-on-write bucket forking. As vertical AI agents increasingly touch sensitive customer data, BYOC (Bring Your Own Cloud) infrastructure is becoming essential, and Alien lets SaaS vendors deploy managed applications inside customer clouds with zero-inbound networking. PgDog scales Postgres databases through transparent sharding and connection pooling, since a single agent can issue orders of magnitude more queries per session than a human user.
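
Copy-on-write forking is what makes per-agent data clones cheap: a fork shares everything with its source and stores only its own writes. The in-memory sketch below illustrates the semantics (a fork is a point-in-time snapshot, and writes on either side stay isolated). The `Bucket` class is hypothetical and says nothing about how Tigris actually implements bucket forking.

```python
from typing import Dict, Optional


class Bucket:
    """Toy object store with O(1) copy-on-write forks built from stacked layers."""

    _DELETED = object()  # tombstone so a fork can hide a key it inherited

    def __init__(self, parent: Optional["Bucket"] = None) -> None:
        self._parent = parent
        self._overlay: Dict[str, object] = {}  # this layer's own writes

    def fork(self) -> "Bucket":
        # Freeze the current writes into a shared read-only layer...
        frozen = Bucket(parent=self._parent)
        frozen._overlay = self._overlay
        # ...then give both the original and the fork fresh, empty layers on top.
        self._parent, self._overlay = frozen, {}
        return Bucket(parent=frozen)

    def put(self, key: str, value: bytes) -> None:
        self._overlay[key] = value

    def delete(self, key: str) -> None:
        self._overlay[key] = Bucket._DELETED

    def get(self, key: str) -> Optional[bytes]:
        layer: Optional[Bucket] = self
        while layer is not None:  # walk from the newest layer to the oldest
            if key in layer._overlay:
                value = layer._overlay[key]
                return None if value is Bucket._DELETED else value
            layer = layer._parent
        return None
```

Forking copies no data, only a reference to the frozen layer. Reads walk the layer chain, so a real system would also need to compact layers over time; the sketch only shows the isolation semantics.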

Above the AI is the application layer: products that combine models, harnesses, and user experience to own a particular workflow or audience.

We have seen this movie before. The creative AI space has always been multi-model and multi-modal, from Stable Diffusion to ByteDance's Seedance for video generation, Kuaishou's Kling for cinematic content, Reve*'s model for controllable image editing, and Beeble*'s SwitchX for controllable video relighting. No single model dominates every use case. The ecosystem and application layer became the real moat. The same pattern is emerging in agents.

OpenArt* is a leading multi-model creative AI platform integrating every major generation engine through an intuitive interface. They grew to over 8 million monthly active users and $70M+ in ARR with a 20-person team. OpenArt became the preferred tool for AI-native creators not by building the best model, but by offering the broadest capability across all models and workflows.

The same design pattern will win in agents. The "OpenArts for agents" will be products that aggregate open-source models and harnesses, provide sensible defaults and an approachable UX, and make it trivially easy for non-expert users to benefit from the multi-model, multi-harness world. The value lies in delivering delightful out-of-the-box experiences: breadth of capability, ease of use, model aggregation, and community ecosystem. The OpenClaw ecosystem, with 5,700+ community skills on ClawHub, is one early signal. Dust, which enables no-code agent creation for business teams and drives 70%+ weekly AI adoption at customers like Clay and Qonto, is another, and it is rapidly gaining traction.

On the other end of the market sits the enterprise opportunity. Red Hat built a $3.4B revenue business before its IBM acquisition by solving a specific problem: Linux was free and powerful, but enterprises needed packaging, support, security patches, compliance certification, and SLAs before they could adopt it. Red Hat made Linux enterprise-ready.

The open-source agent stack faces the same adoption gap. The models are available. The harnesses are proliferating. Enterprises see a bewildering array of choices and need someone to put it all together.

Model serving at scale. Several startups have seen explosive growth serving open-source models. Fireworks AI ($250M Series C, $4B valuation) processes 10+ trillion tokens per day. Together AI ($305M Series B, $3.3B valuation) provides the fastest serverless inference with enterprise-grade SLAs. Baseten ($150M Series D, $2.15B valuation) delivers production-grade inference with best-in-class latency and custom model deployment. Parasail* ($22M Series A) offers scalable and reliable low-cost managed inference with multi-cloud GPU orchestration.

Model specialization. As open models proliferate, a parallel category is emerging around making them better for specific workloads. Unsloth enables 2x faster fine-tuning on consumer GPUs with 60% less memory. Thinking Machines Lab*'s Tinker API provides flexible fine-tuning across methods for models up to 1T parameters. Morph builds specialized subagent models that handle specific coding primitives at dramatically higher efficiency. Simular* builds the best agent harness for computer-use models.

Beyond horizontal platforms, vertical AI agents are capturing value in specific industries, a large space in its own right that is beyond the scope of this post.

Enterprises will pay for integration, support, compliance, and peace of mind, even though the underlying technology is free. As Red Hat proved, that is an enormous business.

Below the AI and above the AI are not separate bets. They reinforce each other. As more companies adopt open-source agents (above the AI), the demand for security, reliability, memory, and primitives (below the AI) grows. As below-the-AI infrastructure matures, it lowers the barrier for more application-layer products to reach production quality.

This is the flywheel. Open-source models and harnesses commoditize the foundation. Application-layer companies specialize for audiences. Infrastructure companies make the whole stack reliable. Each layer accelerates the others.

The agent era is creating an enormous new market. The companies that win will be the ones that solve the hardest problems at each layer of the stack, for both open and closed ecosystems, with the kind of operational depth that no LLM can generate from scratch.

If you are building at any layer of this stack, I would love to hear what you are working on.

Basis Set Ventures is a proud investor in Beeble, Entire, Mem0, OpenArt, Parasail, Reve, Simular, Thinking Machines Lab, and Tigris Data.